AI Executive Talking Points Explained

The New Executive Playbook: Transitioning Enterprise AI from Experimental Hobby to Appreciating Asset

Introduction: The End of the Artificial Intelligence Honeymoon

The enterprise technology landscape has definitively exited the experimental honeymoon phase of generative artificial intelligence. For the past twenty-four months, corporate boardrooms and technology forums have been saturated with speculative excitement, fueled by impressive demonstrations and isolated pilot programs. However, a stark divergence has now emerged between organizations that treat artificial intelligence as a novel technological toy and those engineering it as a core driver of institutional value. The contemporary dialogue across elite engineering forums, financial earnings calls, and strategic planning sessions indicates a systemic shift. Executive mandates are no longer concerned with whether a generative model can produce human-like text; they are entirely focused on operational integration, algorithmic governance, and demonstrable financial leverage.

This exhaustive analysis deconstructs the critical executive imperatives that define the modern artificial intelligence maturity curve, synthesized from twenty-six specific, high-level directives dominating current industry discourse. These imperatives represent a cohesive, interdependent framework spanning strategic economics, infrastructure readiness, architectural execution, risk mitigation, and human capital transformation. By analyzing these mandates through the lens of current market dynamics, software engineering realities, and emerging regulatory frameworks, a clear roadmap emerges for the modern enterprise. The transition requires a fundamental restructuring of how organizations view data, compute, talent, and accountability.

Part I: The Economics of Intent and Asymmetric Competition

The strategic integration of artificial intelligence necessitates a departure from traditional software-as-a-service (SaaS) procurement models. Artificial intelligence is not merely a tool; its economic profile requires a fundamentally different approach to capital allocation and competitive positioning.

The Eradication of Pilot Purgatory

The enterprise ecosystem is currently plagued by what industry analysts and developers on forums like Hacker News routinely term "pilot purgatory." The prevailing executive sentiment is absolute: an artificial intelligence pilot without a quantifiable path to profit and loss (P&L) impact is indistinguishable from a corporate hobby. During the initial wave of generative model enthusiasm, organizations funded countless proofs-of-concept, such as chatbots that summarized documents or generated marketing copy. While technically impressive, these disjointed deployments failed to connect to core revenue engines or operational cost centers.

The contemporary boardroom discussion has shifted strictly toward unit economics. A pilot is only authorized if it demonstrates a verifiable mechanism for reducing operational expenditure or accelerating revenue velocity at scale. If a prototype cannot map its outputs directly to a financial ledger, it is abandoned. This ruthless focus on P&L integration forces engineering teams to build solutions that solve actual business constraints rather than simply showcasing foundational model capabilities. In recent earnings calls, Chief Financial Officers have aggressively cut funding for internal "science projects," redirecting capital only to deployments with a modeled return on investment within two quarters.
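One way to make the "two quarters" rule concrete is a payback-period gate applied before a pilot is funded. The sketch below is illustrative only: it reads "return within two quarters" as modeled payback within six months, and the `PilotCase` fields, the threshold, and the dollar figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PilotCase:
    """Hypothetical business case for an AI pilot."""
    name: str
    upfront_cost: float         # one-time build cost, in dollars
    monthly_net_benefit: float  # modeled monthly savings or added margin, in dollars


def payback_months(case: PilotCase) -> float:
    """Months until cumulative modeled benefit covers the upfront cost."""
    if case.monthly_net_benefit <= 0:
        return float("inf")  # no modeled path to P&L impact
    return case.upfront_cost / case.monthly_net_benefit


def approve(case: PilotCase, max_months: float = 6.0) -> bool:
    """Fund only pilots whose modeled payback fits inside two quarters."""
    return payback_months(case) <= max_months


pilots = [
    PilotCase("invoice triage agent", upfront_cost=300_000, monthly_net_benefit=75_000),
    PilotCase("marketing copy demo", upfront_cost=150_000, monthly_net_benefit=0),
]
for p in pilots:
    print(f"{p.name}: payback {payback_months(p):.1f} months, approved={approve(p)}")
```

The gate deliberately rejects anything without a modeled benefit, which is the arithmetic form of "no path to P&L, it's a hobby."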

Capital Allocation: Return on Investment as a Strategic Weapon

When executed with architectural rigor, the financial leverage of artificial intelligence is unprecedented. The current executive calculus views artificial intelligence not as an experimental cost center, but as a strategic weapon. A foundational investment of five million dollars in data pipelining and model orchestration that yields forty-five million dollars in captured value fundamentally alters the corporate balance sheet.

This magnitude of return on investment is dominating discussions in private equity circles and venture capital boardrooms. The focus has moved from minimizing the compute costs of inference to maximizing the asymmetric returns of automation. Organizations that successfully deploy these high-leverage systems effectively weaponize their capital, generating outsized margins that competitors bound by traditional human-capital constraints cannot match. This dynamic transforms artificial intelligence infrastructure from an IT line item into the primary driver of enterprise valuation, allowing early movers to aggressively acquire market share while their competitors are still calculating basic implementation costs.
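The headline figure is easy to misstate, because the return on investment and the value multiple are different numbers. A worked version of the $5M-in, $45M-captured example, assuming "captured value" is gross and ignoring timing, discounting, and ongoing run costs (all of which a real business case would include):

```python
def roi(invested: float, captured: float) -> float:
    """Return on investment as a fraction: (value captured - cost) / cost."""
    if invested <= 0:
        raise ValueError("invested must be positive")
    return (captured - invested) / invested


def value_multiple(invested: float, captured: float) -> float:
    """Gross multiple on invested capital: value captured / cost."""
    return captured / invested


invested, captured = 5_000_000, 45_000_000
print(f"ROI: {roi(invested, captured):.0%}")                   # ROI: 800%
print(f"Multiple: {value_multiple(invested, captured):.0f}x")  # Multiple: 9x
```

The example therefore describes an 800 percent return, or a 9x multiple on the initial outlay, before any operating costs are netted out.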

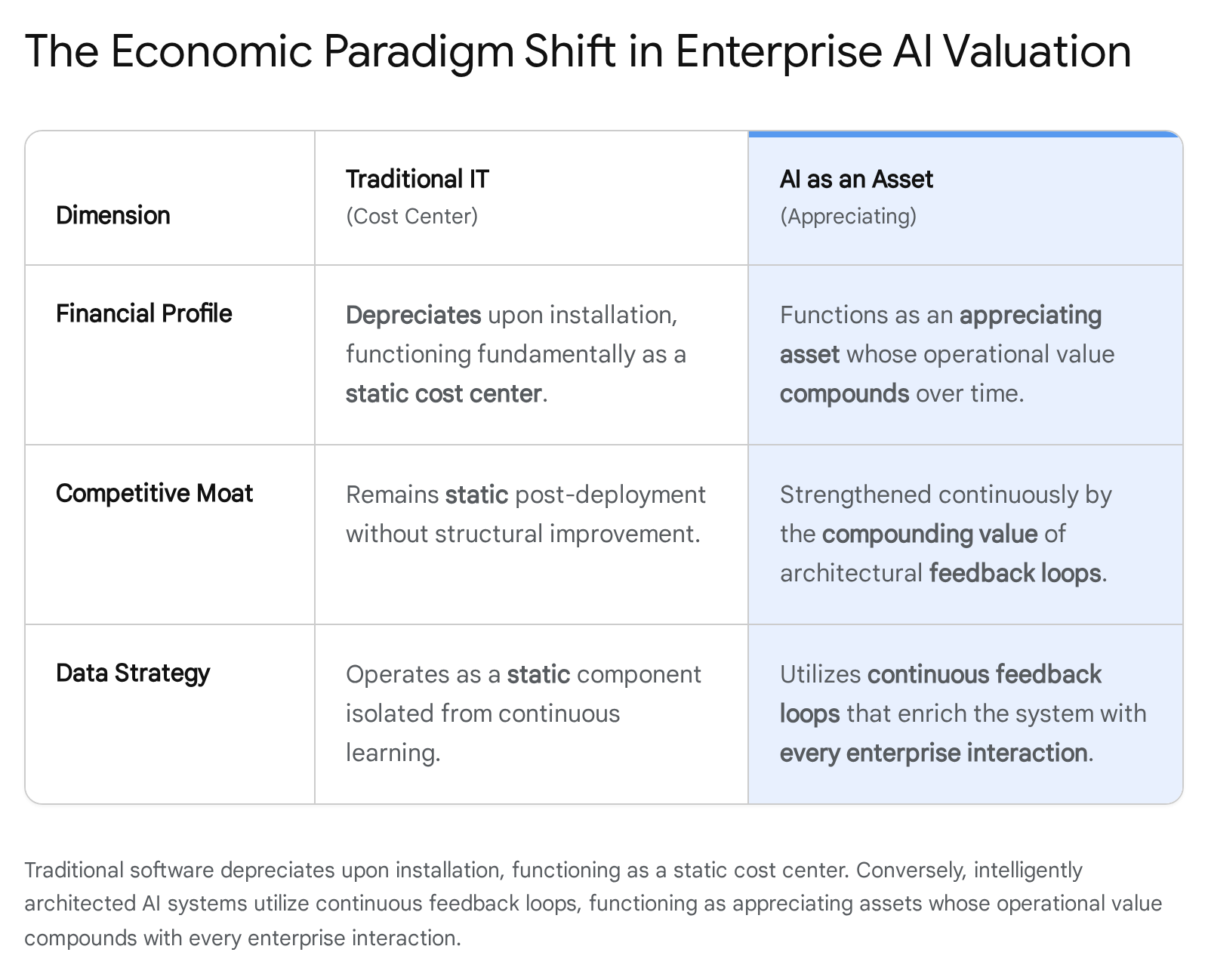

The Appreciation of the Algorithmic Asset

Unlike traditional enterprise software, which immediately begins depreciating upon installation, effectively deployed artificial intelligence is an appreciating asset. The executive mandate dictates that AI isn't a tool; every interaction makes it smarter. This paradigm shift is frequently debated in strategic management literature and technical community discussions regarding reinforcement learning from human feedback (RLHF) and dynamic vector stores.

An enterprise AI system is not a static binary executable. Every interaction, correction, and successful task execution can generate proprietary telemetry that is captured and fed back into the system. The model's weights do not update on their own during use; instead, that feedback flows into refreshed retrieval indexes, evaluation sets, and periodic fine-tuning or preference-tuning runs. Managed this way, the system becomes more capable, accurate, and valuable on its hundredth day of deployment than on its first. This asset appreciation creates a durable moat for early adopters. Competitors who attempt to enter the market later cannot simply buy the same foundation model and achieve parity; they lack the accumulated human-in-the-loop feedback cycles that define the system's institutional memory and operational efficacy.
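In standard deployments, a model's weights do not change while it is being used; the appreciation described here comes from capturing feedback and folding it into periodic updates. A minimal sketch of that capture step, assuming a hypothetical `Interaction` log schema and a simple prompt/completion output format (neither is tied to any particular vendor's fine-tuning API):

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    """One logged model interaction (hypothetical schema)."""
    prompt: str
    model_output: str
    accepted: bool                          # user accepted the output unchanged
    human_correction: Optional[str] = None  # user's edited answer, if any


def curate_examples(log: list[Interaction]) -> list[dict]:
    """Turn logged feedback into prompt/completion pairs for the next update cycle.

    Corrected outputs keep the human's version as the target; accepted outputs
    are kept as-is; rejected outputs with no correction carry no usable target.
    """
    examples = []
    for rec in log:
        if rec.human_correction:
            examples.append({"prompt": rec.prompt, "completion": rec.human_correction})
        elif rec.accepted:
            examples.append({"prompt": rec.prompt, "completion": rec.model_output})
    return examples


log = [  # toy data
    Interaction("Refund window for annual plans?", "30 days.", accepted=False,
                human_correction="Prorated refunds within 60 days of renewal."),
    Interaction("Support hours?", "24/7 via chat.", accepted=True),
    Interaction("Nonprofit discount?", "50%.", accepted=False),
]
for example in curate_examples(log):
    print(json.dumps(example))
```

The curated pairs can then feed an evaluation set, a retrieval index of approved answers, or a scheduled fine-tuning run; which of these an organization uses is a design choice outside this sketch.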

Capturing Intent Versus Cutting Costs

The dominant competitive strategy regarding artificial intelligence has bifurcated into two distinct philosophies: the race to the bottom and the race to the top. The executive summary of this dynamic is stark: "They cut costs. We own intent." The majority of legacy enterprises deploy automation defensively, utilizing models to trim operational expenditures, reduce headcount in tier-one customer support, and optimize back-office workflows. While cost-cutting provides immediate margin relief, it is a finite strategy that rapidly commoditizes.

Conversely, market leaders utilize artificial intelligence offensively to own customer intent. By deploying predictive agents that analyze real-time behavioral data, these organizations anticipate consumer needs before they are explicitly articulated. The boardroom discourse highlights that whoever owns the predictive intent captures the primary value in the supply chain. Defending the bottom line through cost reductions is a survival tactic; expanding the top line through predictive intent architecture is the strategy for market dominance.

The Strategic Calculus of Buy Versus Build

The debate between buying off-the-shelf software and building custom solutions has been completely redefined by the generative model era. The current executive mandate is to buy the platform but build the moat, recognizing that enterprise data is the ultimate differentiator. The foundational intelligence, meaning the large neural networks pretrained on vast corpora of public text and code, is rapidly commoditizing, with open-weights models rivaling proprietary API endpoints on many tasks.

It is a severe misallocation of capital for an enterprise to attempt to build a foundation model from scratch. Instead, organizations must procure the base platform capabilities through commercial APIs or robust open-source alternatives. The true competitive differentiator, the protective moat, is built entirely upon proprietary enterprise data. Discussions among chief technology officers consistently emphasize that the underlying large language model is interchangeable; the unique value is generated by how seamlessly that model is integrated with the organization's unique historical data, internal workflows, and customer interaction telemetry.

The Talent Deficit: Translating Python to Profit

The primary bottleneck in enterprise execution is not a lack of available computing power; it is a severe talent deficit. The specific requirement is not just engineering prowess, but business fluency: organizations need a specialized cohort of "AI Translators" who speak both Python and P&L.

A hundred brilliant data scientists operating in isolation will produce fascinating research that generates zero revenue. Conversely, a hundred business analysts operating without technical understanding will request impossible, unscalable magic from the engineering team. The AI Translator bridges this gap. Discussions on professional networks like Blind and LinkedIn highlight a massive compensation premium for product managers and technical leads who can map the statistical capabilities of vector embeddings directly to the financial imperatives of the enterprise. These individuals ensure that every line of code deployed is directly tied to business value, transforming technical potential into financial reality.

| Strategic Domain | Traditional Approach | AI-Native Approach | Enterprise Impact |
|---|---|---|---|
| Project Funding | Experimental Pilots | P&L-Mandated Deployments | Eliminates "hobbyist" capital drain. |
| Capital Goal | Margin Protection (Cost Cutting) | Owning Customer Intent | Shifts focus from bottom-line defense to top-line growth. |
| Asset Lifecycle | Software Depreciates | Algorithms Appreciate | Creates compounding competitive advantages via RLHF. |
| Talent Acquisition | Siloed Engineers vs. Analysts | Bilingual "AI Translators" | Aligns model architecture with unit economics. |

Part II: The Data Architecture and Infrastructure Imperative

The realization of the economic potential described in Part I is entirely dependent on the underlying technological infrastructure. An aggressive artificial intelligence strategy layered over antiquated data architecture is a recipe for systemic failure.

Paving the Dirt Road of Technical Debt

Executive leadership frequently attempts to deploy cutting-edge, high-frequency algorithmic systems onto legacy infrastructure built for batch processing and relational queries. Engineering communities commonly liken this approach to putting a Ferrari on a dirt road. The executive directive is clear: pave first.

Sophisticated multi-agent systems require massive, continuous streams of structured and unstructured data at sub-second latencies. Legacy architectures, burdened by decades of technical debt, point-to-point API integrations, and siloed data warehouses, often cannot sustain the required throughput. Before deploying advanced generative models, organizations must execute a ruthless modernization of data pipelines. This entails transitioning to event-driven architectures, adopting specialized vector databases, and untangling legacy dependencies. The failure to address technical debt prior to artificial intelligence deployment results in fragile systems that collapse under load, generating immense frustration and negative return on investment.

Refining Digital Oil from Inert Data

A pervasive misconception within the enterprise is that possessing massive volumes of customer data automatically translates to artificial intelligence readiness. As debated extensively in data science forums and Reddit's r/MachineLearning, raw loyalty data, transaction logs, and customer profiles are fundamentally inert. They represent potential energy, but they do not execute actions.

The strategic mandate recognizes that raw data must be actively refined. Artificial intelligence models—specifically embedding models and generative frameworks—act as the refinery, transforming inert rows in a database into predictive, actionable intelligence, often referred to as 'digital oil'. This refinement process involves structuring unstructured text, generating semantic embeddings, and creating real-time feature stores that models can access instantaneously. Without the algorithmic refinery, the enterprise is merely hoarding data, incurring storage costs without generating insights; with it, the organization fuels its predictive engines.
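Embedding pipelines and feature stores are too large to show here, but the core idea of refining inert rows into model-ready signals can be illustrated with a classic, simpler technique: recency, frequency, and monetary (RFM) features computed from a raw transaction log. This is a stand-in for the embedding-based refinement the paragraph describes, and the row schema and toy data are assumptions.

```python
from collections import defaultdict
from datetime import date

# Raw, "inert" transaction rows: (customer_id, purchase_date, amount). Toy data.
transactions = [
    ("c1", date(2024, 5, 1), 120.0),
    ("c1", date(2024, 6, 10), 80.0),
    ("c2", date(2024, 1, 15), 40.0),
]


def rfm_features(rows, as_of: date) -> dict[str, dict]:
    """Refine raw rows into per-customer recency/frequency/monetary features."""
    last_seen: dict[str, date] = {}
    count: dict[str, int] = defaultdict(int)
    spend: dict[str, float] = defaultdict(float)
    for cust, day, amount in rows:
        last_seen[cust] = max(day, last_seen.get(cust, day))
        count[cust] += 1
        spend[cust] += amount
    return {
        cust: {
            "recency_days": (as_of - last_seen[cust]).days,  # days since last purchase
            "frequency": count[cust],                         # number of purchases
            "monetary": spend[cust],                          # total spend
        }
        for cust in last_seen
    }


features = rfm_features(transactions, as_of=date(2024, 7, 1))
print(features["c1"])  # {'recency_days': 21, 'frequency': 2, 'monetary': 200.0}
```

Features like these are what a downstream model actually consumes; the raw log alone does nothing until it is transformed.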

The Physics of Latency and Conversion

In the context of artificial intelligence deployment, latency is not merely an engineering metric; it is the ultimate determinant of commercial viability. The physics of digital interaction dictate a brutal reality recognized in executive circles: a one-second delay in response time correlates directly to a measurable drop in user conversion, frequently cited as approaching seven percent.

When artificial intelligence is deployed in customer-facing scenarios or as real-time coaching for sales representatives, the cognitive window is exceptionally narrow. The system must ingest context, query the vector database, generate a response, and deliver the intervention within a target budget of roughly two hundred milliseconds. If an agent takes three seconds to stream a response, the user is likely to abandon the interaction. Consequently, infrastructure architecture must prioritize inference speed. Engineering teams rely on techniques like model quantization, speculative decoding, edge computing, and optimized API routing to ensure that the artificial intelligence operates at conversational speed.
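A common way to keep a latency target from turning into a user-visible stall is to put a hard timeout around the model call and serve a safe fallback when it is missed. A minimal asyncio sketch, assuming a 200 ms budget; `call_model` is a placeholder that only sleeps, and the fallback text is hypothetical:

```python
import asyncio

FALLBACK = "Let me pull that up for you."  # hypothetical canned response


async def call_model(prompt: str, simulated_latency_s: float) -> str:
    """Placeholder for a real model call; sleeps to simulate inference latency."""
    await asyncio.sleep(simulated_latency_s)
    return f"Suggestion for: {prompt}"


async def within_budget(prompt: str, simulated_latency_s: float,
                        budget_s: float = 0.200) -> tuple[str, bool]:
    """Return (response, met_budget); fall back if the model misses the budget."""
    try:
        reply = await asyncio.wait_for(call_model(prompt, simulated_latency_s), timeout=budget_s)
        return reply, True
    except asyncio.TimeoutError:
        # wait_for cancels the slow call; serve a safe default instead of stalling
        return FALLBACK, False


async def main() -> None:
    print(await within_budget("objection: price too high", simulated_latency_s=0.05))
    print(await within_budget("objection: price too high", simulated_latency_s=0.50))


asyncio.run(main())
```

The timeout does not make inference faster; it bounds how long the user waits. Techniques such as quantization and speculative decoding are what raise the share of calls that finish inside the budget.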

The Fallacy of Perfect Data Versus Iterative Velocity

A recurring pathology in corporate data strategy is the pursuit of absolute data perfection. Organizations often delay artificial intelligence deployments for years while attempting massive Master Data Management (MDM) overhauls, striving to clean and categorize every legacy record. The modern consensus among top-tier data architects is that 'perfect data' costs significantly more in lost time and market share than deploying with 'good enough' data coupled with rapid iteration.

Generative models are comparatively tolerant of unstructured and noisy inputs. The optimal strategy favors speed and iteration. By deploying models on sufficiently clean datasets and establishing robust telemetry, organizations can identify which data actually impacts performance. The feedback loop from the iterative deployment guides subsequent data cleaning efforts, ensuring that capital is spent only on refining the data that demonstrably improves the model's predictive accuracy, rather than engaging in endless, theoretical data sanitization projects that delay time-to-market.
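The feedback loop can be as simple as tallying evaluation failures by the data source they came from, then spending cleanup effort on the worst offenders first. A sketch under assumed inputs: each telemetry record is a hypothetical `(source, correct)` pair produced by whatever evaluation harness the team already runs.

```python
from collections import Counter

# Hypothetical evaluation telemetry: (source system of the record, answer was correct)
eval_results = [
    ("crm_notes", True), ("crm_notes", False), ("crm_notes", False),
    ("product_catalog", True), ("product_catalog", True),
    ("legacy_erp", False), ("legacy_erp", True),
]


def cleaning_priorities(results: list[tuple[str, bool]]) -> list[tuple[str, float, int]]:
    """Rank sources by error rate (ties broken by failure count), worst first."""
    totals: Counter = Counter()
    failures: Counter = Counter()
    for source, correct in results:
        totals[source] += 1
        if not correct:
            failures[source] += 1
    ranked = [(src, failures[src] / totals[src], failures[src]) for src in totals]
    return sorted(ranked, key=lambda row: (row[1], row[2]), reverse=True)


for source, rate, n in cleaning_priorities(eval_results):
    print(f"{source}: {rate:.0%} error rate ({n} failures)")
```

In this toy run the CRM notes would be cleaned first and the product catalog, which causes no observed failures, would be left alone.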

Orchestration and the Mitigation of Vendor Lock-in

The rapid evolution of foundation models creates a profound systemic risk: vendor lock-in. If an enterprise deeply integrates proprietary model APIs directly into its core applications, it becomes entirely beholden to a single provider's pricing, deprecation schedules, and performance fluctuations.

The strategic directive is clear: do not marry a single model. By implementing robust orchestration frameworks, such as advanced iterations of LangChain, LlamaIndex, or proprietary internal gateway routers, the enterprise abstracts the underlying model from the application logic. This orchestration allows the organization to swap the 'brains' of the operation based on task requirements, inference cost, or the release of a superior open-weight model. A query regarding complex legal reasoning might be routed to a heavy, proprietary frontier model, while a simple summarization task is routed to a highly efficient, fine-tuned model running locally. This architecture preserves institutional sovereignty over the artificial intelligence infrastructure.
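The pattern is independent of any specific framework: application code talks to one narrow interface, and a routing table decides which backend serves each task type. The sketch below is not the API of LangChain, LlamaIndex, or any gateway product; `ModelBackend`, `EchoBackend`, and the backend names are hypothetical placeholders.

```python
from typing import Protocol


class ModelBackend(Protocol):
    """The only interface application code depends on (hypothetical)."""
    name: str

    def complete(self, prompt: str) -> str: ...


class EchoBackend:
    """Placeholder backend; a real one would wrap a vendor SDK or a local model server."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Router:
    """Maps task types to backends so models can be swapped without touching callers."""

    def __init__(self, routes: dict[str, ModelBackend], default: ModelBackend) -> None:
        self.routes = routes
        self.default = default

    def complete(self, task_type: str, prompt: str) -> str:
        return self.routes.get(task_type, self.default).complete(prompt)


frontier = EchoBackend("frontier-model")      # placeholder names
local_small = EchoBackend("local-finetuned")
router = Router({"legal_reasoning": frontier, "summarize": local_small}, default=local_small)
print(router.complete("legal_reasoning", "Does clause 7 survive termination?"))
print(router.complete("summarize", "Summarize the Q3 operations report."))

# A better open-weights model ships: swapping it in is a routing change, not an app rewrite.
router.routes["legal_reasoning"] = EchoBackend("open-weights-model")
print(router.complete("legal_reasoning", "Does clause 7 survive termination?"))
```

Routing by task type is the simplest policy; the same seam can host cost-based or fallback routing later without changing any caller.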

Part III: Architectural Execution: From Copilots to Autonomous Agents

The architectural methodologies deployed over the next decade will determine which enterprises thrive and which become obsolete. The focus has entirely shifted from how a model is trained from scratch to how a model is grounded, constrained, and empowered to execute logic.

The Real-Time Supremacy of Retrieval-Augmented Generation (RAG)

One of the most critical corrections in enterprise strategy involves the methodology for injecting proprietary knowledge into foundation models. Initially, organizations attempted to fine-tune large language models on their internal documents—a computationally expensive, fragile process that resulted in static models prone to immediate obsolescence.

The definitive architectural standard has shifted: "Don't train AI. Give it a library card for real-time lookup." This refers to Retrieval-Augmented Generation (RAG). When a query is initiated, the RAG architecture searches a vector database for the most current, relevant proprietary information, retrieves the most semantically relevant passages, and provides them to the generative model as context for synthesizing the final answer. This separation of reasoning (the model) from knowledge (the database) means the system answers from data that is only as old as the latest index update, avoids the cost of retraining the model every time the underlying knowledge changes, and allows for strict enterprise access control and permissioning.
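A toy end-to-end version of the retrieval step, including the permission filter the paragraph mentions. It swaps in bag-of-words cosine similarity for a real embedding model and vector database, so it matches words rather than meaning; the documents, roles, and the 0.2 similarity floor are invented for illustration.

```python
import math
import re
from collections import Counter

# Toy knowledge base; each document lists the roles allowed to read it.
DOCS = [
    {"id": "pol-1", "roles": {"support", "finance"},
     "text": "Enterprise refunds are prorated within 60 days of renewal."},
    {"id": "pol-2", "roles": {"hr"},
     "text": "Salary bands are reviewed every March by the compensation team."},
    {"id": "pol-3", "roles": {"support"},
     "text": "Support hours are 24/7 for enterprise customers via chat."},
]


def vectorize(text: str) -> Counter:
    """Bag-of-words term counts; a real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9/]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, role: str, k: int = 1, min_score: float = 0.2) -> list[dict]:
    """Enforce permissions first, then rank what the caller may see by similarity."""
    q = vectorize(query)
    scored = [(cosine(q, vectorize(d["text"])), d) for d in DOCS if role in d["roles"]]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score >= min_score]


def build_prompt(query: str, role: str) -> str:
    """Hand the generator only retrieved context, with an instruction to stay inside it."""
    context = "\n".join(f"[{d['id']}] {d['text']}" for d in retrieve(query, role)) or "(no documents)"
    return ("Answer using only the context below. If the answer is not there, say so.\n"
            f"Context:\n{context}\n\nQuestion: {query}")


print(build_prompt("How are enterprise refunds handled?", role="support"))
```

Filtering by role before ranking, rather than after, keeps restricted text from ever reaching the prompt; the `hr` role asking the same question receives no context, and the generator is instructed to say so.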

Demystifying and Eliminating Hallucinations

The phenomenon of 'hallucinations'—where a model generates plausible but factually incorrect information—was long considered the fatal flaw of generative artificial intelligence. However, the discourse among lead engineers and product managers has fundamentally reframed this issue. A hallucination is no longer viewed as an inherent, unsolvable mystery of the neural network; it is categorized simply as a bug in a chatbot or a configuration error in an Enterprise Agent.

In a properly designed enterprise system utilizing strict RAG constraints, low-temperature generation settings, and grounding mechanisms, the model is tightly constrained to the facts supplied in its context window. These controls substantially reduce fabrication, although no current technique mathematically rules it out. If a production agent hallucinates, it is treated as a failure of the retrieval pipeline to provide the correct context, or a failure of the system prompt to enforce strict boundaries. By treating hallucinations as standard software bugs requiring architectural solutions rather than philosophical dilemmas, enterprises have effectively reframed accuracy as a solvable engineering problem in production environments.
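Treating hallucinations as bugs implies testing for them at runtime. One simple and admittedly crude guardrail is a post-generation check that flags answer sentences with little lexical support in the retrieved context. The stopword list and the 0.6 overlap threshold below are arbitrary assumptions; a paraphrased falsehood would pass this check, so it complements rather than replaces proper evaluation.

```python
import re

# Arbitrary, minimal stopword list for illustration.
STOPWORDS = {"the", "a", "an", "is", "are", "of", "to", "for", "and", "in",
             "on", "within", "by", "via"}


def content_words(text: str) -> set[str]:
    return {w for w in re.findall(r"[a-z0-9/]+", text.lower()) if w not in STOPWORDS}


def unsupported_sentences(answer: str, context: str, min_overlap: float = 0.6) -> list[str]:
    """Flag answer sentences whose content words are mostly absent from the context."""
    ctx = content_words(context)
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = content_words(sentence)
        if words and len(words & ctx) / len(words) < min_overlap:
            flagged.append(sentence)  # candidate hallucination: block or escalate
    return flagged


context = "Enterprise refunds are prorated within 60 days of renewal."
answer = ("Enterprise refunds are prorated within 60 days. "
          "Customers also get a free upgrade to Platinum.")
print(unsupported_sentences(answer, context))
# ['Customers also get a free upgrade to Platinum.']
```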

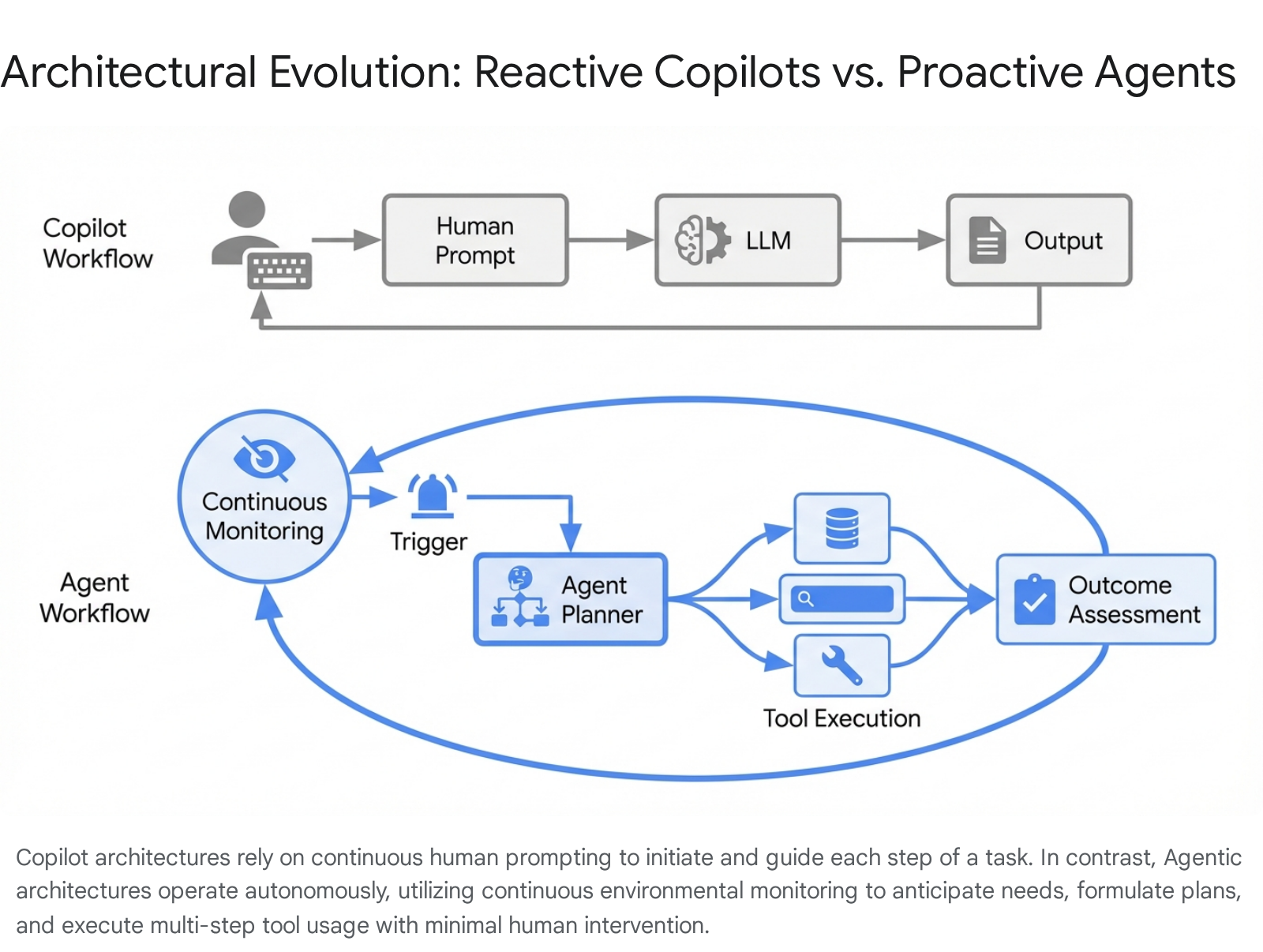

The Autonomous Transition: From Copilots to Agents

The most financially consequential architectural shift currently underway is the transition from copilot frameworks to autonomous agents. A copilot is fundamentally reactive; it is a sophisticated autocomplete that waits for human prompting, requiring continuous human supervision to advance a task.

While copilots offer incremental productivity gains, the true leverage lies in agentic workflows. An agent anticipates. It is equipped with a goal, access to tools, and the autonomy to plan and execute multi-step processes without continuous human intervention. Boardrooms are classifying this transition as a billion-dollar shift in enterprise margin. For example, rather than a human asking a copilot to draft an email to a churn-risk client, an autonomous agent monitors product usage telemetry, identifies the churn risk, generates the personalized intervention strategy, drafts the communication, and executes the workflow through the CRM system, only escalating to a human if predefined risk thresholds are exceeded. This shift from reactive assistance to proactive execution represents the realization of artificial intelligence as an independent digital workforce.
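The monitor, assess, act, and escalate loop in the churn example can be sketched in a few lines. Everything here is hypothetical: `StubCRM` stands in for a real CRM integration, the login-drop score stands in for a trained churn model, and both thresholds are placeholders. A production agent would typically also use a language model to draft the message.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    """Hypothetical usage telemetry for one customer account."""
    account_id: str
    logins_last_30d: int
    logins_prior_30d: int
    annual_value: float


@dataclass
class StubCRM:
    """Stand-in for a CRM integration: records actions instead of calling an API."""
    actions: list[tuple[str, str]] = field(default_factory=list)

    def send_outreach(self, account_id: str, message: str) -> None:
        self.actions.append(("outreach", account_id))

    def escalate(self, account_id: str, reason: str) -> None:
        self.actions.append(("escalate", account_id))


def churn_risk(acct: Account) -> float:
    """Toy score: relative drop in logins versus the prior period, clamped to [0, 1]."""
    if acct.logins_prior_30d == 0:
        return 0.0
    drop = 1 - acct.logins_last_30d / acct.logins_prior_30d
    return max(0.0, min(1.0, drop))


def run_agent(accounts: list[Account], crm: StubCRM,
              act_above: float = 0.4, escalate_value: float = 100_000.0) -> None:
    """Monitor, assess, and act; hand high-stakes cases to a human."""
    for acct in accounts:
        risk = churn_risk(acct)
        if risk < act_above:
            continue  # healthy account: no action
        if acct.annual_value >= escalate_value:
            crm.escalate(acct.account_id, f"churn risk {risk:.2f}")  # human decides
        else:
            crm.send_outreach(acct.account_id, "We noticed lower usage lately. Can we help?")


crm = StubCRM()
run_agent([Account("a1", 2, 20, 250_000), Account("a2", 3, 10, 12_000),
           Account("a3", 18, 20, 40_000)], crm)
print(crm.actions)  # [('escalate', 'a1'), ('outreach', 'a2')]
```

The escalation rule is what separates an agent from an unsupervised script: it acts alone only where the stakes are below the threshold the business has set.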

The Cognitive Chief of Staff

When viewing productivity through the lens of agentic architecture, the conceptualization of the technology must evolve. Executive messaging stresses that enterprise AI is not merely a sophisticated search bar designed for information retrieval; it is a cognitive "Chief of Staff" deployed specifically for the automation of low-value cognitive labor.

Throughout the enterprise, highly compensated professionals spend immense portions of their day on administrative overhead—scheduling, data aggregation, initial drafting, and status reporting. The architectural mandate is to deploy agents that seamlessly handle this volume of tedious digital administration. By offloading the mechanical processing of information, the human workforce is freed to dedicate their entire cognitive capacity to high-value strategic judgment, relationship building, and complex problem-solving. This redesign of workflows maximizes the return on human capital, transforming how middle management operates.

Part IV: Risk, Governance, and Fiduciary Shielding

As artificial intelligence moves from advisory roles to autonomous execution, the risk profile of the enterprise shifts dramatically. Governance can no longer be a secondary compliance function; it must be engineered directly into the runtime environment.

Algorithmic Scripts and Millisecond Auditing

Traditional enterprise risk management relies on post-hoc auditing—reviewing financial transactions or employee actions at the end of the month or quarter. This paradigm is fundamentally incompatible with algorithmic execution. As noted in executive risk assessments, an artificial intelligence agent is not a human with a corporate credit card who might make a mistake and be reprimanded later; it is an algorithmic script executing thousands of actions per minute.

If an autonomous pricing agent contains a flawed heuristic, the damage is not contained to a single transaction; it can propagate across the entire customer base in seconds. Therefore, auditing must transition from a retrospective human activity to a real-time, algorithmic necessity. The system must be designed to audit its own outputs in milliseconds against predefined policy parameters before the action is executed in the live environment.
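Auditing "before the action is executed" means the policy check sits inline, between the agent's proposal and any side effect. A minimal sketch for the pricing example, assuming two hypothetical policy parameters (a minimum margin over unit cost and a maximum per-action price change):

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PricePolicy:
    """Hypothetical guardrail parameters for an autonomous pricing agent."""
    min_margin: float = 0.15      # price must stay at least 15% above unit cost
    max_change_pct: float = 0.10  # no single move larger than 10%


def audit_price_change(current: float, proposed: float, unit_cost: float,
                       policy: PricePolicy = PricePolicy()) -> list[str]:
    """Return the list of policy violations; an empty list means the action may run."""
    violations = []
    if proposed < unit_cost * (1 + policy.min_margin):
        violations.append("below minimum margin")
    if abs(proposed - current) / current > policy.max_change_pct:
        violations.append("change exceeds per-action limit")
    return violations


def execute_if_compliant(current: float, proposed: float, unit_cost: float,
                         apply_price: Callable[[float], None]) -> bool:
    """Audit inline, before any side effect; block the action on any violation."""
    if audit_price_change(current, proposed, unit_cost):
        return False  # in practice: log the attempt and route it to review
    apply_price(proposed)
    return True


applied: list[float] = []
print(execute_if_compliant(100.0, 105.0, 80.0, applied.append))  # True
print(execute_if_compliant(100.0, 60.0, 80.0, applied.append))   # False
print(audit_price_change(100.0, 60.0, 80.0))
```

Because the check is a few arithmetic comparisons, it adds microseconds, not seconds, to each action.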

The Algorithmic Circuit Breaker

The velocity of agentic execution introduces severe systemic vulnerabilities, particularly in financial, supply chain, or automated margin-management environments. Operating on stale data is a catastrophic risk. The boardroom maxim is blunt: relying on four-hour-old margin data for automated execution can result in bankruptcy in four minutes.

This reality mandates the implementation of hard algorithmic circuit breakers. These are non-negotiable, deterministic code structures that constantly monitor data freshness, execution velocity, and variance from expected outcomes. If the artificial intelligence behaves erratically, experiences a sudden spike in latency, or attempts actions outside tight confidence intervals, the circuit breaker severs the model's access to production systems instantaneously, reverting operations to human control or safe-mode defaults to prevent runaway execution.
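A minimal sketch of such a breaker in Python. The thresholds (60 seconds of data age, 0.5 seconds of latency, three consecutive errors) are placeholders, and the injectable `clock` exists only so the demo can simulate the four-hour-old margin data from the maxim above.

```python
import time
from typing import Any, Callable


class CircuitOpenError(RuntimeError):
    """Raised when the breaker has tripped and automated execution is halted."""


class CircuitBreaker:
    """Deterministic kill switch wrapped around an automated action.

    Trips on stale input data, a latency spike, or repeated errors. Once open it
    stays open until an operator calls reset(), reverting control to humans.
    """

    def __init__(self, max_data_age_s: float = 60.0, max_latency_s: float = 0.5,
                 max_errors: int = 3, clock: Callable[[], float] = time.time) -> None:
        self.max_data_age_s = max_data_age_s
        self.max_latency_s = max_latency_s
        self.max_errors = max_errors
        self.clock = clock  # injectable so tests can simulate time
        self.errors = 0
        self.open = False
        self.reason = ""

    def _trip(self, reason: str) -> None:
        self.open, self.reason = True, reason

    def reset(self) -> None:
        """Manual reset after a human has investigated the trip."""
        self.open, self.reason, self.errors = False, "", 0

    def call(self, action: Callable[[], Any], data_timestamp: float) -> Any:
        if self.open:
            raise CircuitOpenError(self.reason)
        if self.clock() - data_timestamp > self.max_data_age_s:
            self._trip("stale input data")
            raise CircuitOpenError(self.reason)
        start = self.clock()
        try:
            result = action()
        except Exception:
            self.errors += 1
            if self.errors >= self.max_errors:
                self._trip("too many consecutive errors")
            raise
        self.errors = 0
        if self.clock() - start > self.max_latency_s:
            # This action already ran; the trip blocks every action after it.
            self._trip("latency spike")
        return result


now = [1_000.0]  # simulated wall-clock seconds
breaker = CircuitBreaker(clock=lambda: now[0])
print(breaker.call(lambda: "repriced", data_timestamp=990.0))  # fresh data: runs

four_hours = 4 * 3600
try:
    breaker.call(lambda: "repriced", data_timestamp=now[0] - four_hours)
except CircuitOpenError as exc:
    print("halted:", exc)  # halted: stale input data
```

Keeping the breaker open until a human resets it is a deliberate choice: an automatic retry would simply resume execution on the same stale feed.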

Fiduciary Duty: The Sentinel and the Shield

Deploying artificial intelligence without rigorous, embedded guardrails is increasingly viewed as a direct violation of executive fiduciary duty. The integration of advanced cyber-security protocols is paramount. The boardroom consensus is absolute: security platforms acting as a sentinel (monitoring behavior) and a shield (defending against malicious inputs) are the prerequisite for any autonomous deployment. Without this runtime security, the enterprise is exposed to catastrophic liability.

Board-Level Accountability and the OAIG

The governance of enterprise artificial intelligence cannot be buried deep within the traditional Information Technology hierarchy. It requires the establishment of an independent, highly empowered entity, often conceptualized as the Office of Artificial Intelligence Governance (OAIG). The OAIG must report directly to the Chief Executive Officer, ensuring board-level visibility and preventing the obfuscation of algorithmic risks by mid-level engineering managers prioritizing release velocity over systemic safety.

Transparency, Liability, and the Chief Executive's Burden

The era of "black box" artificial intelligence deployments is over, driven by both legal precedent and regulatory pressure. The liability framework regarding algorithmic action is rapidly solidifying in the courts. If an enterprise artificial intelligence discriminates against a protected class in loan originations or automated hiring algorithms, the legal and public accountability bypasses the machine learning engineer who trained the model. The directive is severe: if the AI discriminates, the CEO testifies, not the engineer.

The organization cannot claim ignorance of its own digital proxy. This transforms explainability from a nice-to-have technical feature into a hard legal requirement. The operational rule is definitive: if the engineering team cannot explain to a federal regulator or a judge how the model reached a given decision, the model cannot be shipped into production. Transparency architecture, utilizing techniques such as decision logging, feature attribution, attention mapping, and logic tracing, must be built concurrently with the model itself.
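Being able to explain a decision presupposes being able to reconstruct it. A minimal sketch of a per-decision audit record; the schema, field names, and values are hypothetical, and logging alone does not make a model explainable. It preserves the evidence that an explanation, or a regulator, will ask for.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical audit record written for every automated decision."""
    decision_id: str
    model_version: str
    inputs: dict
    retrieved_sources: list[str]
    outcome: str
    reason_codes: list[str]  # human-readable factors behind the outcome
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def fingerprint(self) -> str:
        """SHA-256 of the canonical JSON form, so later edits to a stored record are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


record = DecisionRecord(
    decision_id="loan-000123",
    model_version="credit-model-v7",
    inputs={"income": 52_000, "debt_to_income": 0.46},
    retrieved_sources=["underwriting-policy-v3"],
    outcome="declined",
    reason_codes=["debt_to_income exceeds policy limit"],
)
print(json.dumps(asdict(record), indent=2))
print(record.fingerprint())
```

Recording the model version and retrieved sources alongside the outcome is what lets a team replay a contested decision months later.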

The Regulatory Moat of the European Union Artificial Intelligence Act

While many technology sectors view the stringent regulations of the European Union Artificial Intelligence Act as a bureaucratic barrier to innovation, astute executive leadership views it as a strategic opportunity. The Act enforces rigorous requirements for risk assessment, transparency, and human oversight for high-risk applications.

Rather than retreating from these jurisdictions, market leaders are actively engineering their systems to exceed these compliance standards globally. By embracing the highest regulatory bar, these organizations transform the EU AI Act into a competitive moat for trusted brands. Smaller competitors or less disciplined organizations will lack the infrastructure, capital, and governance required to meet these evidentiary burdens. Consequently, the trusted enterprise brand that can definitively prove the safety, auditability, and fairness of its algorithms will monopolize institutional contracts, leaving non-compliant innovators locked out of the most lucrative markets.

| Governance Domain | Legacy IT Posture | Modern AI Mandate | Executive Consequence |
|---|---|---|---|
| Auditing | Post-hoc Monthly Review | Millisecond Runtime Auditing | Prevents algorithmic runaway and errors replicated at catastrophic scale. |
| System Fail-safes | IT Helpdesk Tickets | Hard Algorithmic Circuit Breakers | Protects against automated execution on stale data. |
| Reporting Structure | Buried in IT Department | OAIG Reporting to CEO | Ensures board-level visibility and alignment of risk appetite. |
| Legal Liability | Engineer/Vendor Blame | CEO Testifies | Demands radical transparency and explainability before shipping. |

Part V: The Human Element: Talent, Adoption, and Enduring Culture

The ultimate success of an artificial intelligence transformation is rarely dictated by the capability of the underlying neural networks; it is almost entirely determined by the organization's ability to manage the human-machine interface.

Human Value in the Age of Volume

As autonomous agents assume responsibility for the vast majority of routine cognitive tasks, the enterprise must intentionally redesign its workforce around a new division of labor. The design philosophy is explicitly defined in workforce strategy sessions: artificial intelligence handles volume; humans handle judgment.

The machine is vastly superior at parsing tens of thousands of documents, identifying statistical anomalies, and executing repetitive digital workflows. However, the machine entirely lacks ethical reasoning, empathy, strategic nuance, and the ability to navigate complex human relationships. Organizations must redesign job descriptions to amplify these uniquely human traits. Instead of compensating employees for the volume of data they process, the enterprise must compensate them for the quality of judgment they apply to the insights generated by the algorithmic volume.

Adoption and the Friction of Change Management

The most significant risk to an enterprise artificial intelligence deployment is never the underlying technology; it is the friction of change management. If an advanced agentic workflow is deployed into an organization that is psychologically unprepared, the workforce will actively reject it. This rejection manifests as subtle sabotage, refusal to trust the system outputs, and a reversion to manual legacy processes.

Overcoming this friction requires a relentless internal messaging campaign focused on augmentation rather than replacement. Employees must be trained not just on how to use the software, but on how to manage their new digital subordinates. Adoption metrics must be tracked as rigorously as latency or accuracy. If the human workforce views the artificial intelligence as a competitor, the deployment will stall; if they view it as a lever to multiply their own output and value, it will scale exponentially.

The Reality of Job Security

The anxiety surrounding technological unemployment is pervasive in the broader social discourse and across professional forums. However, the operational reality within the modern enterprise is far more nuanced and competitive. The blunt truth communicated in strategic workforce planning is that artificial intelligence, as a standalone entity, will not replace the modern knowledge worker. However, a professional who utilizes artificial intelligence effectively will absolutely replace a professional who refuses to adapt.

The required skill set is shifting rapidly from pure information retrieval and manual execution to algorithmic management, prompt engineering, and complex systems oversight. Job security in the modern era is entirely dependent on an individual's willingness to integrate these new capabilities into their daily workflow, transitioning from a manual individual contributor to a manager of an automated portfolio.

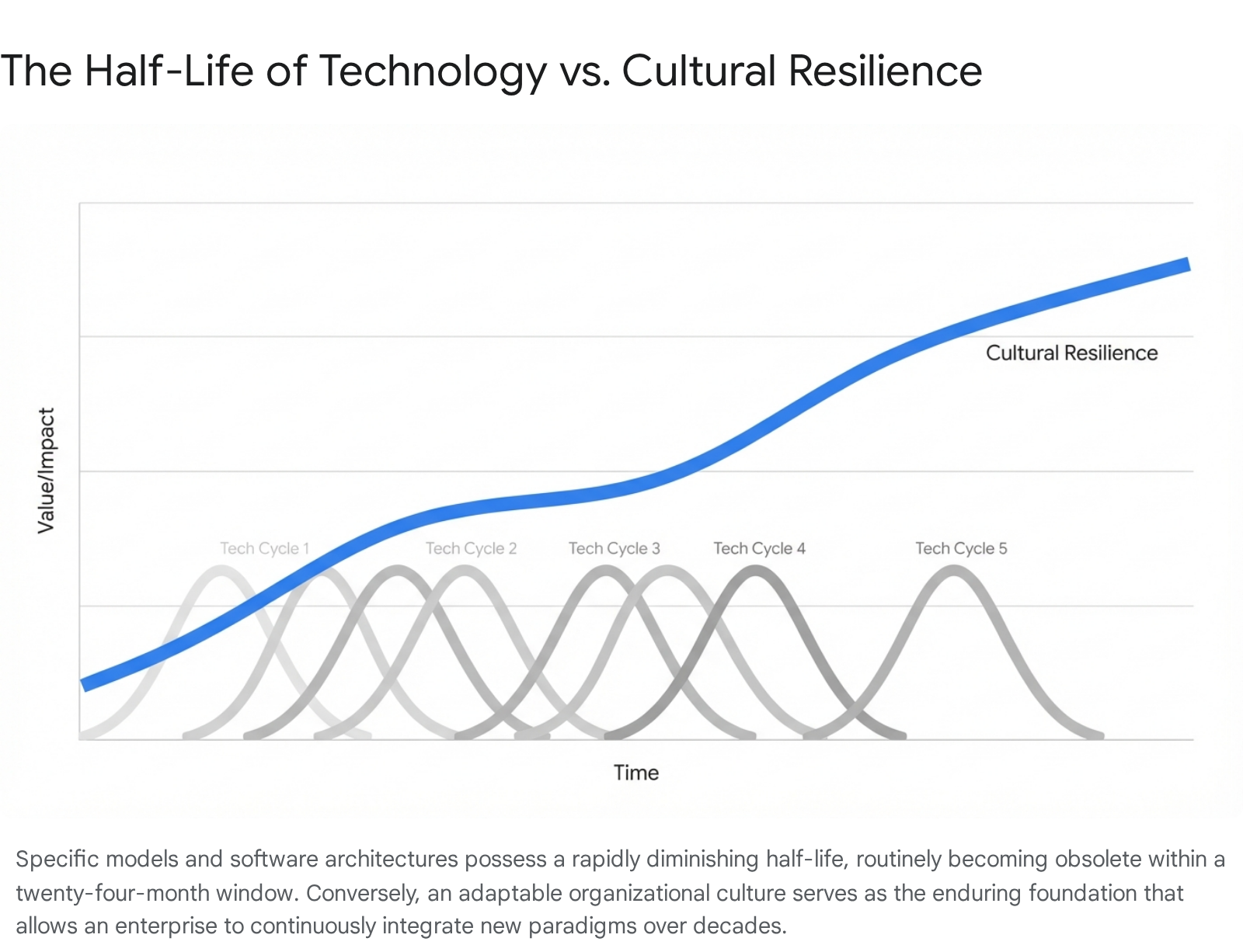

Institutional Resilience: The Enduring Power of Culture

In an era of unprecedented technological velocity, a profound paradox emerges. The half-life of technical skills and specific software stacks is collapsing. The cutting-edge foundational model deployed today will inevitably decay into obsolescence within twenty-four months, superseded by a more efficient, capable, and multimodal architecture.

Therefore, building an enterprise strategy solely around a specific piece of technology is structurally unsound. The only enduring competitive advantage is the organizational culture. While the technology decays in months, a culture of relentless adaptability, intellectual curiosity, and rigorous execution endures for decades. The most successful enterprises do not merely train their employees on the latest artificial intelligence tools; they cultivate a psychological resilience that allows the organization to absorb, integrate, and exploit continuous disruption without losing operational coherence.

Conclusion: The Final Transition

The twenty-six executive mandates analyzed throughout this report represent the demarcation line between the legacy enterprise and the artificial intelligence-native organization. The transition is not merely a software upgrade; it is a fundamental restructuring of corporate mechanics. It demands the cessation of isolated, unprofitable pilot programs in favor of high-leverage algorithmic deployments that demonstrably expand profit margins. It requires the modernization of underlying data infrastructures to support the brutal latency and throughput demands of autonomous agents. It necessitates the implementation of runtime security guardrails, robust algorithmic circuit breakers, and an independent Office of Artificial Intelligence Governance that reports directly to the highest levels of corporate leadership.

Above all, this transition demands a profound evolution in human capital management. The enterprise must bridge the gap between technical engineering and strategic finance through specialized talent, intentionally divide labor between machine volume and human judgment, and cultivate an enduring culture of adaptability. Organizations that successfully synthesize these complex economic, architectural, and human elements will transform artificial intelligence from a chaotic, experimental risk into an appreciating, autonomous asset, establishing a competitive moat that will define the trajectory of their industry for decades to come.

Executive Talking Points (26) — Quick Reference

This report is built on the following high-level directives dominating current industry discourse:

- ON PILOTS: "No path to P&L? It's a hobby."

- ON TALENT: "100 AI Translators who speak Python and P&L."

- ON SPEED: "'Perfect data' costs more than 'good enough' + iterating."

- ON COMPETITION: "They cut costs. We own intent. Bottom vs top."

- ON DATA: "Loyalty data is inert. AI refines into 'Digital Oil'."

- ON RISK: "Not a credit card — a script. Audited in milliseconds."

- ON HALLUCINATIONS: "Bug in chatbot. Config error in Enterprise Agent. Solved."

- ON TECH DEBT: "Ferrari on a dirt road. Pave first."

- ON CIRCUIT BREAKER: "4hr-old margin data = bankrupt in 4 minutes."

- ON VENDOR LOCK-IN: "Don't marry one model. Orchestration to swap brains."

- ON LATENCY: "1s delay = 7% conversion drop. Coaching in <200ms."

- ON RAG: "Don't train AI. Give it a library card for real-time lookup."

- ON PRODUCTIVITY: "Not a search bar — a Chief of Staff for low-value cognitive labor."

- ON ADOPTION: "Biggest risk isn't tech — it's change management. Augmentation, not replacement."

- ON AGENTS: "Copilot waits. Agent anticipates. That transition = $1B in margin."

- ON SAFETY: "AI without guardrails is a liability. Sentinel + Shield = fiduciary duty."

- ON HUMAN VALUE: "AI handles volume. Humans handle judgment. That's the design."

- ON GOVERNANCE: "OAIG under the CEO, not buried in IT. Board-level accountability."

- ON LIABILITY: "If the AI discriminates, the CEO testifies. Not the engineer."

- ON TRANSPARENCY: "If you can't explain it to a regulator, don't ship it."

- ON REGULATION: "EU AI Act isn't a barrier — it's a competitive moat for trusted brands."

- ON JOB SECURITY: "AI won't replace you. Someone using AI will."

- ON CULTURE: "Tech decays in 24 months. Culture endures for decades."

- ON ROI: "$5M invested to capture $45M. That's not a cost center — that's a weapon."

- ON BUY VS BUILD: "Buy the platform. Build the moat. Your data is the differentiator."

- ON AI AS ASSET: "AI isn't a tool. It's an appreciating asset. Every interaction makes it smarter."