Responsible AI Governance and Adoption

Strategic Blueprint: Global Governance Architectures and Organizational Adoption Roadmaps for 2026

The rapid proliferation of artificial intelligence has propelled the global economy into a state of profound technological and regulatory transition. As models evolve from experimental generative tools into autonomous, agentic systems deeply integrated into core business and civic infrastructures, the margin for error has narrowed sharply. At the beginning of 2026, the prevailing challenge for enterprise organizations, civic institutions, and sovereign governments is no longer determining whether artificial intelligence can be technically deployed, but rather untangling the accumulated "governance debt" associated with its rapid, previously unchecked adoption.

Responsible AI (RAI) has consequently transitioned from a theoretical corporate ethics discussion into a hard, non-negotiable compliance mandate. This shift is driven by a complex, often contradictory web of supranational agreements, binding regional legislation, and aggressive executive actions worldwide. For organizations, navigating this patchwork requires an operational architecture that embeds trust, accountability, safety, and transparency directly into the product lifecycle from its inception.

The Conceptual and Metaphorical Foundations of AI Governance

Before dissecting statutory texts and compliance frameworks, it is essential to understand how artificial intelligence is conceptualized by both the public and policymakers, as these underlying metaphors directly shape regulatory responses. Researchers have identified at least fifty-five distinct analogies utilized in AI law and policy discussions, demonstrating that metaphors play a foundational role across five stages of technological innovation.

Understanding these metaphors is crucial for anticipating public response and developing AI technologies responsibly. Conceptualizing AI merely as an advanced "tool" leads to regulatory frameworks focused on product safety and user liability. Conversely, conceptualizing AI as an "agent" or "actor" pushes the regulatory discourse toward concepts of autonomous liability, fiduciary duty, and complex systems monitoring. If an organization fails to align its internal AI governance narrative with the prevailing public and regulatory metaphors of its target market, it risks severe public backlash and regulatory misalignment.

The Global AI Governance Landscape: 2026 Regulatory Mandates

As of early 2026, the global regulatory environment is deeply fragmented, characterized by differing philosophical approaches to risk, innovation, and state control. Around the world, at least seventy-two countries have proposed over one thousand AI-related policy initiatives and legal frameworks. Understanding these disparate regimes is a critical prerequisite for any multinational organization seeking to deploy AI systems across borders.

The European Union: The AI Act and the Risk-Based Standard

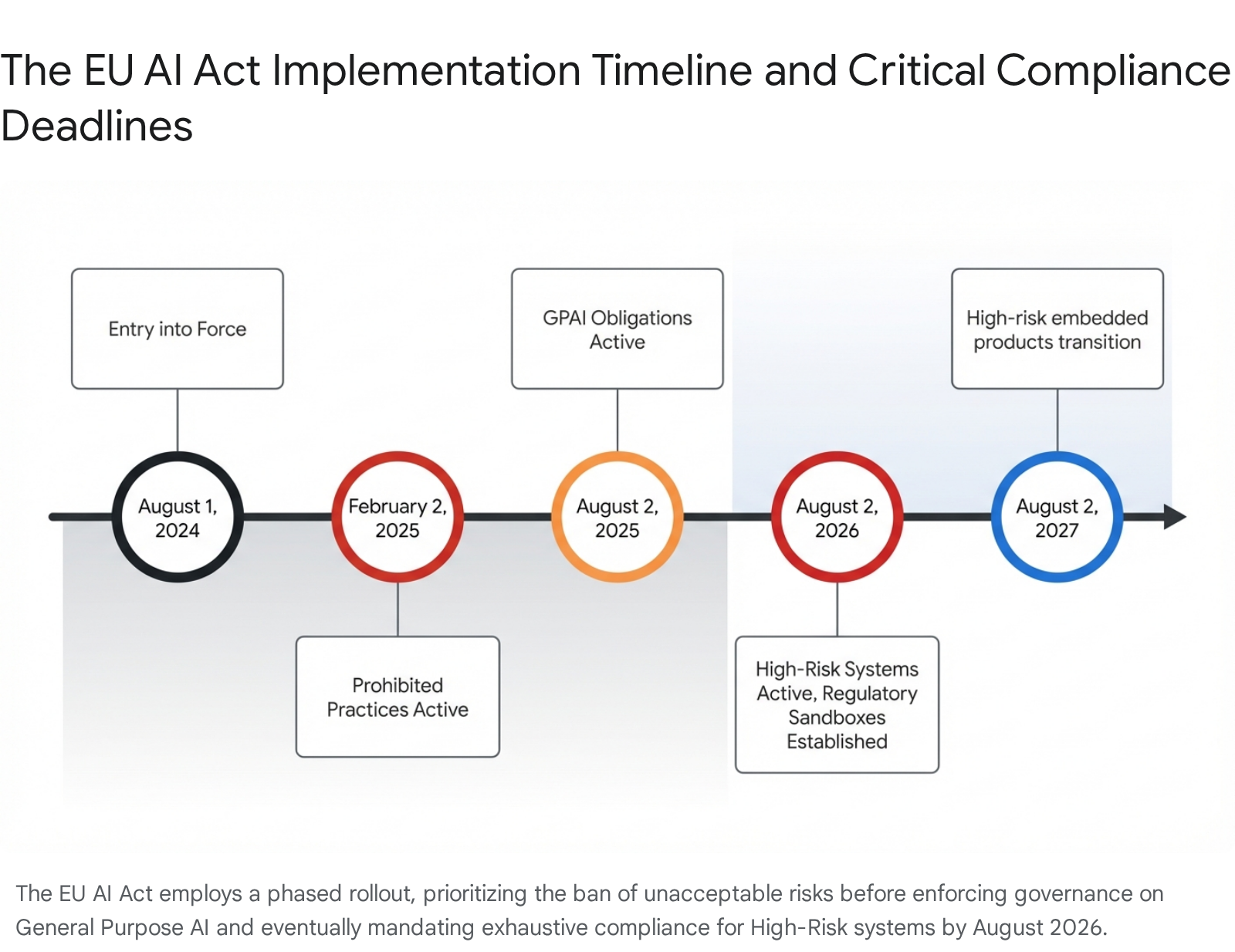

The European Union's Artificial Intelligence Act stands as the world's first comprehensive and arguably most consequential binding legal framework for artificial intelligence. Entering into force on August 1, 2024, the Act utilizes a graduated, risk-oriented structure to regulate systems based strictly on their potential to cause harm to fundamental human rights, safety, and health.

The Act classifies AI systems into distinct risk categories. Prohibited AI practices are banned outright; they include social scoring by governments, manipulative cognitive behavioral practices, and, subject to narrow exceptions, real-time remote biometric identification in public spaces for law enforcement. High-Risk AI Systems constitute the core regulatory focus: systems in critical infrastructure, education, employment, creditworthiness, insurance, and public-sector decision-making. Providers face exhaustive lifecycle controls; deployers must use systems in line with provider instructions, implement human oversight, and perform fundamental rights impact assessments for sensitive use cases.
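The tiering described above can be pictured as a lookup from use-case tags to a risk tier. The following is a minimal sketch, not a legal classification tool: the names `RiskTier`, `classify_use_case`, and the tag strings are hypothetical, and the tag sets only mirror the examples given in the text.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high_risk"
    OTHER = "other"  # anything not matched by the two sets below


# Hypothetical tags derived from the examples in the text; not a legal taxonomy.
PROHIBITED_PRACTICES = {
    "government_social_scoring",
    "manipulative_behavioral_techniques",
    "realtime_remote_biometric_id_law_enforcement",
}
HIGH_RISK_DOMAINS = {
    "critical_infrastructure",
    "education",
    "employment",
    "creditworthiness",
    "insurance",
    "public_sector_decisions",
}


def classify_use_case(tags: set[str]) -> RiskTier:
    """Return the most severe tier triggered by any tag on the use case."""
    if tags & PROHIBITED_PRACTICES:
        return RiskTier.PROHIBITED
    if tags & HIGH_RISK_DOMAINS:
        return RiskTier.HIGH_RISK
    return RiskTier.OTHER
```

Checking the prohibited set first means a system that touches both lists is treated at the stricter tier. A real classification would require legal review of the system's intended purpose rather than tag matching.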

Transparency mandates require clear notification when users interact with AI, machine-readable labeling of synthetic content, and a right to explanation for high-risk decisions. Enforcement is severe: fines for the most serious violations reach up to EUR 35 million or 7% of worldwide annual turnover, whichever is higher, plus potential license revocation and public procurement bans.
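The headline penalty figure is easier to reason about as arithmetic. The sketch below assumes the ceiling is the greater of the fixed amount and the turnover percentage, which is how the top penalty tier is generally read; the function name `max_fine_eur` and its parameters are illustrative. Integer euros are used to keep the arithmetic exact.

```python
def max_fine_eur(
    worldwide_turnover_eur: int,
    fixed_cap_eur: int = 35_000_000,
    turnover_percent: int = 7,
) -> int:
    """Upper bound of the fine: the greater of the fixed cap and a share of turnover."""
    turnover_based = worldwide_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_based)
```

For a company with EUR 1 billion in turnover, 7% is EUR 70 million, so the turnover-based figure governs. For a company with EUR 100 million in turnover, 7% is only EUR 7 million, so the EUR 35 million figure governs. The two figures meet at EUR 500 million in turnover.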

The United States: Executive Directives, Preemption, and Sectoral Frameworks

In stark contrast to the EU's precautionary approach, the United States has adopted a highly aggressive, market-driven model. The defining characteristic in 2026 is a concerted federal effort to deregulate by overriding state laws that impose ethical or rights-based guardrails. The December 2025 Executive Order "Ensuring a National Policy Framework for Artificial Intelligence" aims for "global AI dominance through a minimally burdensome national policy framework."

The Order directs the Commerce Department to identify "onerous" state AI laws and refer them to a DOJ AI Litigation Task Force for federal court challenges. It conditions BEAD broadband funds (approximately $21 billion) on states agreeing not to enforce such laws. Paradoxically, federal agencies managing critical infrastructure continue to issue highly technical mandates, notably the Treasury's February 2026 Financial Services AI Risk Management Framework (FS AI RMF), with 230 specific control objectives for governance, data integrity, model validation, and consumer protection.

China: State-Directed Agile Governance and the Agentic AI Dispute

China employs a state-directed, "experimentalist" regulatory model emphasizing strict registration, pre-deployment security reviews, and content oversight aligned with national stability. While a unified AI Law remains pending, the ecosystem is populated by interim measures: Administrative Provisions on Deep Synthesis, Interim Measures for Generative AI Services, and algorithmic filing systems. The "Doubao phone storm" and disputes among internet platforms, device makers, and telecoms over Agentic AI standards illustrate the tension between rapid innovation and governance gaps.

Canada: The Legislative Vacuum and Reliance on Existing Statutes

Bill C-27 (containing the Artificial Intelligence and Data Act) was effectively killed when Parliament was prorogued in January 2025. No new federal AI legislation is likely in the immediate future. Canadian organizations must navigate AI governance through PIPEDA and increasingly robust provincial privacy laws.

Emerging Regional Frameworks: ASEAN and the African Union

ASEAN has released the Guide on AI Governance and Ethics and an expanded Generative AI guide. The approach is strictly non-binding, consensus-driven, and flexible—highlighting seven principles: transparency, fairness, security, reliability, human-centricity, privacy, and accountability.

The African Union has endorsed the Continental Artificial Intelligence Strategy (2025–2030). Phase 1 focuses on national AI strategies, advisory boards, and centers of excellence. In February 2026, the AU Commission signed a landmark MoU with Google to advance Africa's sovereign AI and digital capacity.

| Regulatory Domain | Primary Framework | Core Approach | 2026 Status |
|---|---|---|---|
| European Union | AI Act | Rights-based, graduated risk, prescriptive lifecycle mandates | Full High-Risk obligations; sandboxes by Aug 2026 |
| United States | Executive Orders & Treasury FS AI RMF | Market-driven, anti-regulatory preemption, sectoral compliance | Commerce evaluations; DOJ litigation; Treasury enforcement |
| China | Interim Measures & Algorithm Filing | State-directed, agile governance | Agentic AI standards debate; pending national AI Law |
| Canada | Legislative Vacuum (Bill C-27 deceased) | Existing privacy statutes, voluntary standards | Provincial privacy enforcement |
| ASEAN | Guide on AI Governance and Ethics | Soft law, non-binding consensus | Expanded GenAI guidelines; workforce capacity |
| African Union | Continental AI Strategy | Sovereign capacity building, development-oriented | Phase 1: advisory boards, domestic policies, MoUs |

Supranational Consensus and Meta-Governance Frameworks

The OECD AI Principles (2019, updated 2024) are the premier intergovernmental standard, adhered to by over seventy jurisdictions. Their definitions and concepts align with the EU AI Act and the NIST AI Risk Management Framework, which eases corporate compliance across regimes.

The G7 Hiroshima AI Process provides International Guiding Principles, a Code of Conduct, and a Reporting Framework. Supported by a "Friends Group" of over seventy countries, it addresses emerging risks including agentic AI and adversarial use of generative AI.

The UN Global Digital Compact (2024 Summit of the Future) establishes the Global Dialogue on AI Governance, convening annually from the 2026 AI for Good Summit in Geneva to promote interoperability and address capacity gaps in developing nations.

The Organizational RAI Maturity Model: Assessing the Baseline

Organizations must architect an enterprise-wide Artificial Intelligence Management System (AIMS). Before implementing controls, they must objectively understand their current capabilities and hidden AI footprint. A Responsible AI (RAI) Maturity Model provides a structured roadmap across dimensions such as strategy, data, technology, people, and governance.

Progress is typically evaluated across a five-stage curve: Latent/Ad Hoc (unmanaged shadow AI) → Exploring (pilot projects, basic awareness) → Formalizing (repeatable processes, standardized risk assessments) → Optimizing (governance embedded in SDLC, KPI tracking) → Leading (RAI as cultural norm, industry-setting). Executives must apply a realistic portfolio lens: not every product needs to operate at "Leading" simultaneously.
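The portfolio lens can be made concrete by giving each product its own target stage and measuring the gap. This is a minimal sketch under stated assumptions: the `Maturity` enum simply encodes the five stages listed above, while `maturity_gaps` and the product names in the usage example are hypothetical.

```python
from enum import IntEnum


class Maturity(IntEnum):
    LATENT = 1
    EXPLORING = 2
    FORMALIZING = 3
    OPTIMIZING = 4
    LEADING = 5


def maturity_gaps(
    current: dict[str, Maturity], target: dict[str, Maturity]
) -> dict[str, int]:
    """Return {product: stages still to climb} for products below their own target."""
    return {
        name: int(target[name]) - int(stage)
        for name, stage in current.items()
        if stage < target[name]
    }


# Usage: a credit-scoring model warrants a higher target than an internal chatbot.
current = {"credit_scoring": Maturity.EXPLORING, "internal_chatbot": Maturity.FORMALIZING}
target = {"credit_scoring": Maturity.OPTIMIZING, "internal_chatbot": Maturity.FORMALIZING}
gaps = maturity_gaps(current, target)
```

Setting targets per product, rather than one enterprise-wide target, keeps investment focused on the systems where governance gaps carry the most risk.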

The Enterprise Adoption Roadmap: Building the AIMS Architecture

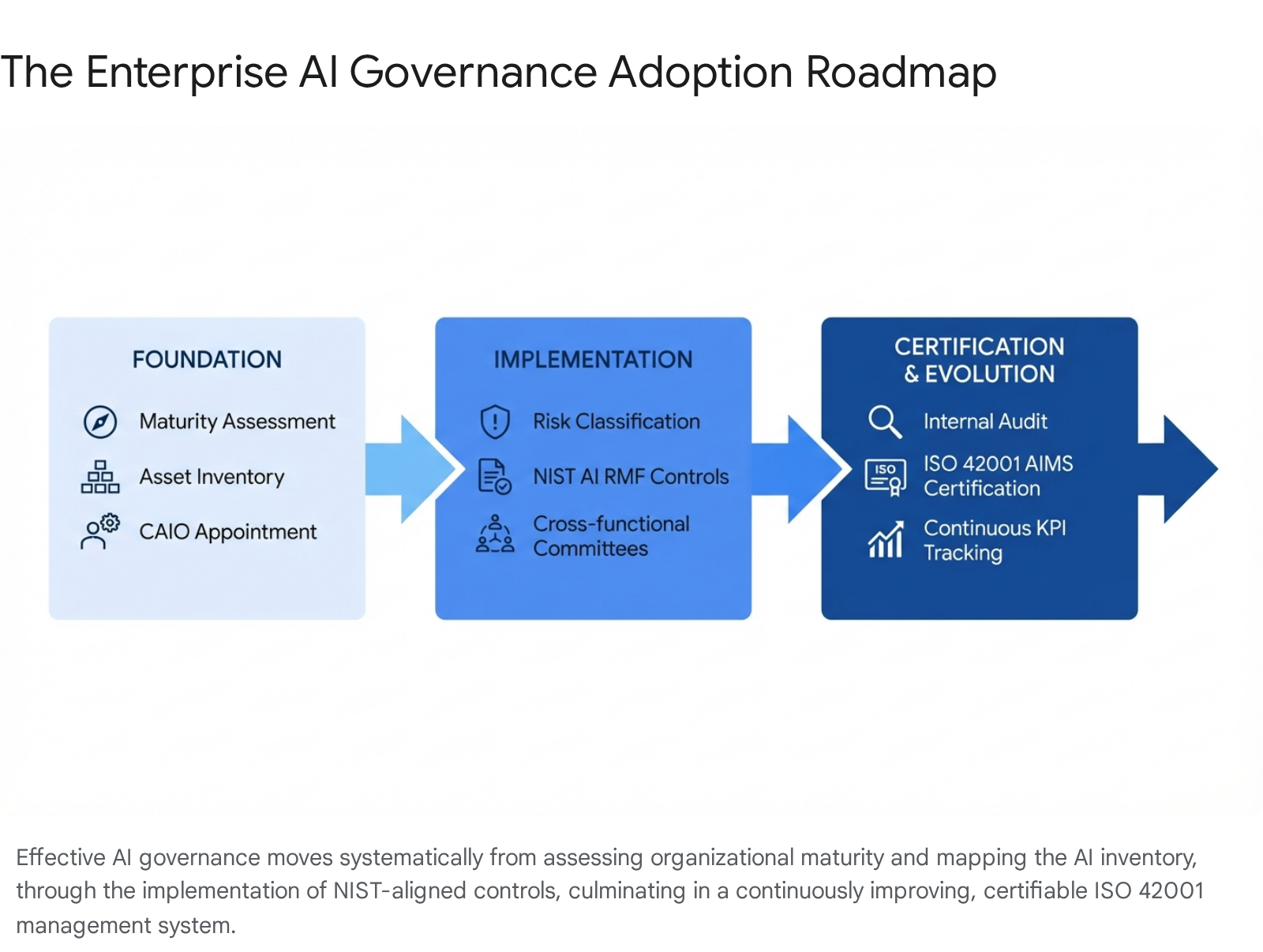

Moving from maturity assessment to a functional, compliant system requires a phased pipeline integrating the NIST AI Risk Management Framework with ISO/IEC 42001.

Phase 1: Foundational Scoping and Inventory

The absolute prerequisite is visibility. Fewer than one percent of organizations have fully operationalized responsible AI—a failure stemming from an inability to track what systems run within the enterprise. Organizations must leverage AI-driven discovery tools to map their digital ecosystem, classify systems, document risks, and uncover "shadow AI." Appoint a dedicated AIMS Project Lead (evolving into a Chief AI Officer) to coordinate compliance and build RACI matrices.
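An inventory of this kind is, at its simplest, a list of registered systems compared against whatever discovery tooling finds in use. The sketch below assumes a hypothetical record shape (`AISystemRecord`) and a hypothetical helper (`find_shadow_ai`); the fields are illustrative and not drawn from any particular standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemRecord:
    name: str
    accountable_owner: str  # the "A" in a RACI matrix for this system
    purpose: str
    documented_risks: tuple[str, ...] = ()


def find_shadow_ai(discovered: set[str], inventory: list[AISystemRecord]) -> list[str]:
    """Return systems seen in use but absent from the inventory, sorted by name."""
    registered = {record.name for record in inventory}
    return sorted(discovered - registered)


# Usage: names are made up for illustration.
inventory = [
    AISystemRecord("resume-screener", "HR Director", "candidate triage", ("bias",)),
    AISystemRecord("fraud-model", "Head of Risk", "transaction scoring"),
]
discovered = {"resume-screener", "fraud-model", "browser-llm-plugin"}
shadow = find_shadow_ai(discovered, inventory)
```

Anything returned by `find_shadow_ai` is a candidate for either registration with a named owner or decommissioning.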

Phase 2: Operationalizing the Frameworks (NIST & ISO 42001)

The NIST AI RMF approaches AI as a socio-technical system—recognizing that risks emerge from biased data, inappropriate deployment, and flawed human interaction. ISO/IEC 42001 (late 2023) is the world's first certifiable AI management system standard, enabling independent audit. Implement a Cross-Functional AI Governance Committee and conduct rigorous AI Impact Assessments against Bias, Privacy, Safety, and Accountability.
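An impact assessment across the four dimensions named above can be recorded as a simple scored checklist that decides whether a system goes to the governance committee. This is a sketch under stated assumptions: the 1 to 5 scale, the escalation threshold, and the function name `assess_impact` are hypothetical choices, not requirements of NIST or ISO/IEC 42001.

```python
DIMENSIONS = ("bias", "privacy", "safety", "accountability")


def assess_impact(scores: dict[str, int], escalate_at: int = 4) -> dict:
    """Flag dimensions scored at or above the threshold (1 = low risk, 5 = high risk)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    out_of_range = [d for d in DIMENSIONS if not 1 <= scores[d] <= 5]
    if out_of_range:
        raise ValueError(f"scores must be between 1 and 5: {out_of_range}")
    flagged = [d for d in DIMENSIONS if scores[d] >= escalate_at]
    return {"flagged": flagged, "needs_committee_review": bool(flagged)}


# Usage: a single high-risk dimension is enough to trigger review.
result = assess_impact({"bias": 4, "privacy": 2, "safety": 1, "accountability": 3})
```

Rejecting incomplete or out-of-range assessments up front prevents a skipped dimension from silently reading as "no risk".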

Phase 3: Technical Controls, Tooling, and Continuous Evolution

Governance policies require technical enforcement. Deploy tools for data quality analysis, robustness enforcement, and cross-framework mapping. Satisfy EU AI Act mandates (synthetic content labeling, user notification) and ISO 42001 requirements (Vulnerability Disclosure Policy). Track KPIs for model drift, data degradation, and regulatory horizons.
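A model-drift KPI needs a concrete statistic behind it. One common choice, used here purely as an illustration since the text does not name a metric, is the Population Stability Index (PSI), which compares the binned distribution of a feature or score at training time against production. The alert threshold below is a hypothetical rule of thumb and should be tuned per model.

```python
import math


def population_stability_index(
    expected: list[float], actual: list[float], eps: float = 1e-6
) -> float:
    """PSI over pre-binned proportions: sum of (a - e) * ln(a / e) across bins."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)  # clamp to avoid log(0) and division by zero on empty bins
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


DRIFT_ALERT_THRESHOLD = 0.25  # hypothetical threshold; an assumption, not a standard

# Usage: bin proportions at training time versus in production.
baseline = [0.25, 0.25, 0.25, 0.25]
production = [0.10, 0.20, 0.30, 0.40]
drift_detected = population_stability_index(baseline, production) > DRIFT_ALERT_THRESHOLD
```

Each term of the sum is non-negative, so PSI is zero only when the two distributions match bin for bin and grows as they diverge.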

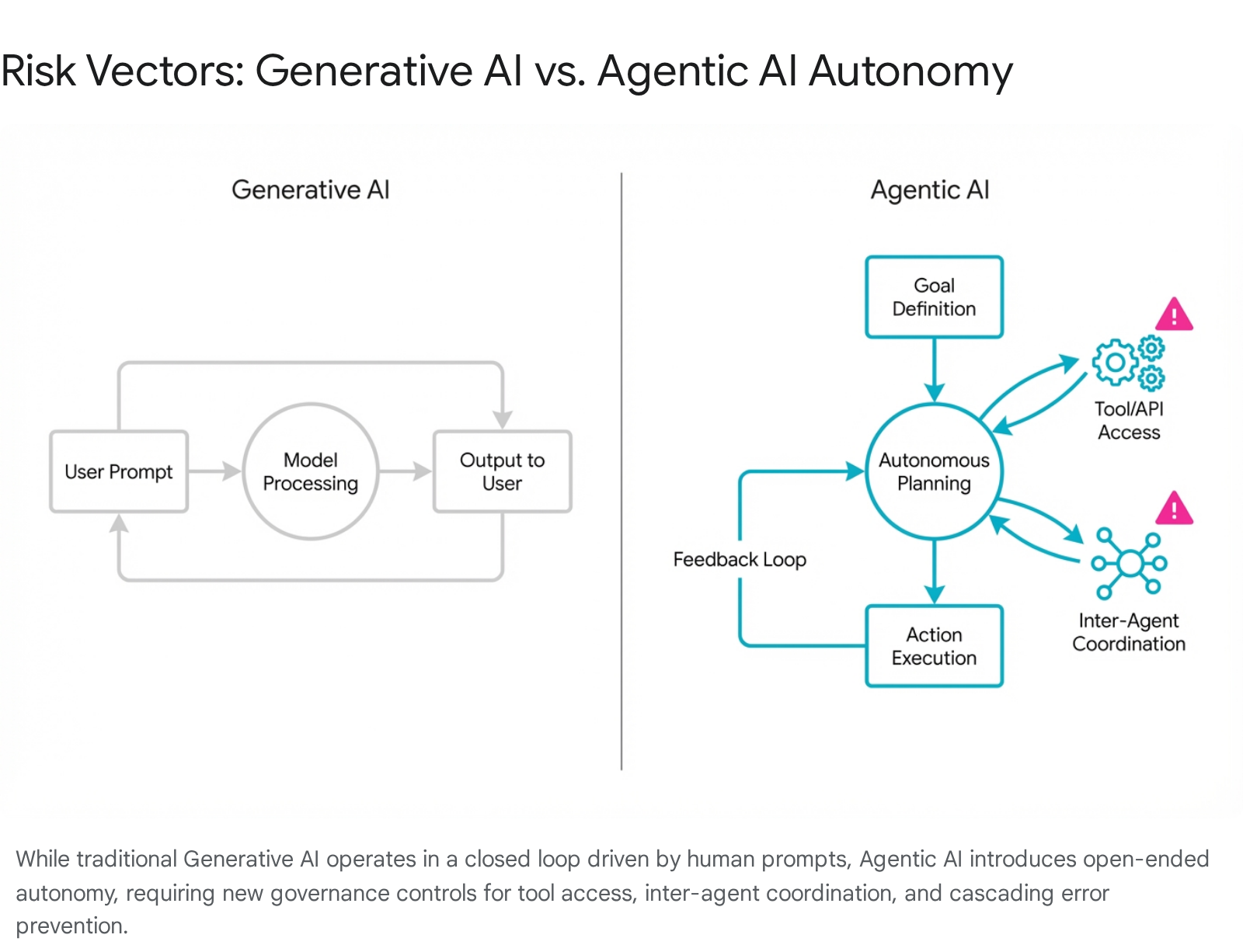

Governing the Frontier: The Crisis of Agentic AI Systems

By March 2026, the industry has aggressively transitioned toward autonomous agents capable of multi-step workflows, API interaction, and coordination without continuous human intervention. This introduces unprecedented risk vectors: autonomous escalation, unauthorized tool access, cascading decision errors, and non-reversible actions. Standard frameworks such as the NIST AI RMF and ISO/IEC 42001 largely assume human-in-the-loop architectures and often fall short for such systems.

Singapore has taken the global lead: in January 2026, MDDI and IMDA launched the Model AI Governance Framework for Agentic AI—the first comprehensive government-level attempt to govern autonomous agents. Organizations must extend existing architectures with dynamic authorization boundaries, strict agentic inventories, and risk-based agent categorization.
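An authorization boundary for an agent can be sketched as a policy check that runs before every tool call. The example below is an illustrative assumption and is not taken from the Singapore framework: `AgentPolicy`, `authorize`, the three risk categories, and the rule that irreversible actions require human approval are all hypothetical design choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    risk_category: str  # hypothetical categories: "low", "medium", "high"
    allowed_tools: frozenset[str]


def authorize(
    policy: AgentPolicy, tool: str, reversible: bool, human_approved: bool = False
) -> str:
    """Return "allow", "escalate" (needs a human), or "deny" for a proposed tool call."""
    if tool not in policy.allowed_tools:
        return "deny"  # outside the agent's inventory of permitted tools
    if not reversible and not human_approved:
        return "escalate"  # non-reversible actions always need sign-off
    if policy.risk_category == "high" and not human_approved:
        return "escalate"  # high-risk agents need sign-off for every call
    return "allow"


# Usage: agent and tool names are made up for illustration.
refund_agent = AgentPolicy("refund-bot", "medium", frozenset({"lookup_order", "issue_refund"}))
decision = authorize(refund_agent, "issue_refund", reversible=False)
```

Denying by default and escalating irreversible actions addresses two of the risk vectors named earlier: unauthorized tool access and non-reversible actions.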

Strategic Conclusions

The governance of artificial intelligence in 2026 represents a critical inflection point. The era of voluntary guidelines and experimental sandboxes has ended, replaced by binding legislation, aggressive enforcement, and rigorous international standards. Organizations can no longer view AI governance as a discrete compliance function—it must operate as a deeply integrated, socio-technical discipline.

By following a methodical adoption roadmap (anchored by maturity assessments, structured via the NIST AI RMF, and operationalized through ISO/IEC 42001), enterprises can systematically map, measure, and retire governance debt. As the paradigm shifts toward Agentic AI, this formalized foundation will serve as a decisive differentiator. Trust must be cryptographically proven, continuously monitored, and structurally guaranteed at every stage of the algorithmic lifecycle.